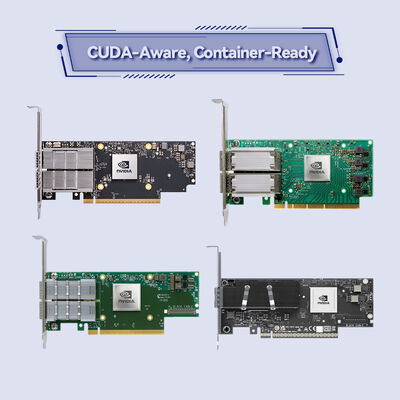

NVIDIA ConnectX-6 InfiniBand Adapter MCX653106A-ECAT 200Gb/s Smart NIC

Product details:

| Brand name: | Mellanox |
|---|---|
| Model number: | MCX653106A-ECAT |
| Document: | connectx-6-infiniband.pdf |

Payment & delivery terms:

| Minimum order quantity: | 1 piece |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer carton |
| Delivery time: | Depends on stock availability |
| Payment terms: | T/T |
| Supply ability: | Supplied per project/batch |

Detailed Information

| Product status: | In stock | Application: | Server |
|---|---|---|---|
| Interface type: | InfiniBand | Ports: | Dual |
| Maximum speed: | 100GbE | Type: | Wired |
| Condition: | New and original | Warranty period: | 1 year |
| Model: | MCX653106A-ECAT | Name: | Mellanox Network Card Original MCX653106A-ECAT ConnectX-6 100Gb/s dual-port QSFP56 Ethernet |
| Keyword: | Mellanox network card | | |
| Highlights: | NVIDIA ConnectX-6 InfiniBand adapter, 200Gb/s Smart NIC, Mellanox network card with warranty | | |

Product Description

200Gb/s Dual-Port Smart Adapter with In-Network Computing

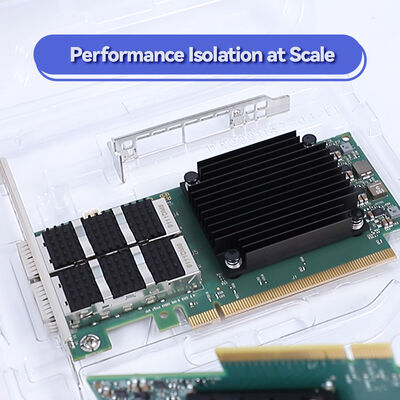

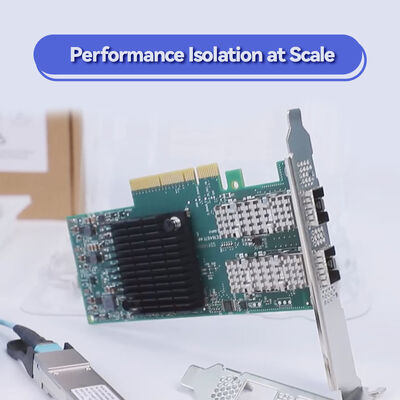

The NVIDIA ConnectX-6 MCX653106A-ECAT delivers up to 200Gb/s of bandwidth, sub-microsecond latency, and hardware offloads for HPC, AI, and hyperconverged storage. With RDMA, NVMe-oF acceleration, block-level XTS-AES encryption, and PCIe 4.0, this dual-port QSFP56 InfiniBand adapter is built to raise data center efficiency and scalability. It is well suited to GPU clusters, ML training, and mission-critical networks.

The MCX653106A-ECAT is part of the NVIDIA ConnectX-6 InfiniBand adapter family, designed for the most demanding workloads. It combines two QSFP56 ports with hardware-based reliable transport, congestion control, and In-Network Computing engines; across the family, the ports support up to 200Gb/s InfiniBand or 200Gb/s Ethernet, depending on the OPN. By offloading collective operations, MPI tag matching, and encryption from the host CPU, the adapter reduces CPU load and improves application performance in large-scale clusters. Enterprises, research labs, and hyperscale data centers rely on ConnectX-6 to build energy-efficient, low-latency networks.

- Up to 200Gb/s per link (HDR InfiniBand / 200GbE)

- Up to 215 million messages per second

- Block-level XTS-AES 256/512-bit encryption, FIPS compliant

- Collective offloads and NVMe-oF target/initiator offloads

- PCIe Gen 4.0/3.0 x16 (dual-port support)

- SR-IOV with up to 1K VFs, ASAP2, Open vSwitch offload

- Support for RoCE, XRC, DCT, On-Demand Paging, and Adaptive Routing

- Stand-up PCIe card (low profile), dual-port QSFP56

Built on NVIDIA's proven InfiniBand architecture, ConnectX-6 integrates In-Network Computing to accelerate MPI operations, deep learning frameworks, and storage protocols. The adapter supports Remote Direct Memory Access (RDMA) for zero-copy data transfers that bypass the CPU and the kernel. Hardware-based congestion control keeps performance predictable even under heavy load. In addition, NVIDIA GPUDirect RDMA lets data move directly between GPU memory and the network adapter, significantly reducing latency for AI training. With NVMe over Fabrics (NVMe-oF) offloads, the card provides high-throughput, low-latency access to NVMe flash while lowering CPU utilization on storage arrays.

- High-Performance Computing (HPC): Large-scale simulations, weather modeling, and computational fluid dynamics that need low latency and high bandwidth.

- AI and Machine Learning Clusters: Distributed training of deep neural networks, using GPUDirect and RDMA for maximum efficiency.

- NVMe-oF Storage Systems: Target or initiator offloads enable high-performance disaggregated storage with low CPU overhead.

- Hyperscale Data Centers: Virtualized environments with SR-IOV, overlay networks, and service chaining.

- Financial Services: Ultra-low-latency trading infrastructure that requires deterministic performance.

The ConnectX-6 MCX653106A-ECAT is compatible with a wide range of servers, switches, and operating systems. It interoperates with NVIDIA Quantum InfiniBand switches (HDR 200Gb/s) and 200GbE Ethernet switches. The adapter supports standard PCIe slots (x16, x8, x4) and includes driver support for the major operating system platforms.

| Parameter | Specification |
|---|---|
| Product model | MCX653106A-ECAT |
| Data rate | 200Gb/s, 100Gb/s, 50Gb/s, 40Gb/s, 25Gb/s, 10Gb/s, 1Gb/s (InfiniBand and Ethernet) |
| Ports | 2x QSFP56 connectors |
| Host interface | PCIe Gen 4.0 / 3.0 x16 (supports x8, x4, x2, and x1 configurations) |
| Latency | Sub-microsecond (typically <0.8µs) |
| Message rate | Up to 215 million messages per second |
| Encryption | XTS-AES 256/512-bit, FIPS 140-2 compliance ready |
| Form factor | PCIe low-profile stand-up card (tall bracket mounted, short bracket included) |
| Dimensions (without bracket) | 167.65mm x 68.90mm |
| Power consumption | 22W typical (depending on traffic) |
| Virtualization | SR-IOV (1K VFs), VMware NetQueue, NPAR, ASAP2 flow offload |
| Management | NC-SI, MCTP over PCIe/SMBus, PLDM for firmware update and monitoring |
| Remote boot | InfiniBand, iSCSI, PXE, UEFI |
| Operating systems | RHEL, SLES, Ubuntu, Windows Server, FreeBSD, VMware vSphere, OFED stack |
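
As a rough cross-check of the host interface row above, the sketch below compares nominal PCIe link bandwidth with aggregate port bandwidth. It relies on the published PCIe per-lane rates (8 GT/s for Gen 3, 16 GT/s for Gen 4) and 128b/130b line encoding, and it ignores packet-level protocol overhead, so real throughput will be somewhat lower. The function names are illustrative and not part of any NVIDIA tool.

```python
# Nominal PCIe link bandwidth versus aggregate network port bandwidth.
# Assumption: only line encoding is accounted for; TLP headers and flow
# control are ignored, so these figures are upper bounds.

PCIE_GT_PER_LANE = {3: 8.0, 4: 16.0}  # giga-transfers per second per lane
ENCODING_EFFICIENCY = 128 / 130        # 128b/130b encoding (Gen 3 and Gen 4)


def pcie_gbps(gen: int, lanes: int) -> float:
    """Return one-direction link bandwidth in Gb/s after line encoding."""
    return PCIE_GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


def host_link_is_bottleneck(gen: int, lanes: int, ports: int, port_gbps: float) -> bool:
    """True when all ports running at line rate would exceed the PCIe link."""
    return ports * port_gbps > pcie_gbps(gen, lanes)


if __name__ == "__main__":
    for gen in (3, 4):
        for lanes in (8, 16):
            limited = host_link_is_bottleneck(gen, lanes, ports=2, port_gbps=100)
            print(f"Gen{gen} x{lanes}: {pcie_gbps(gen, lanes):6.1f} Gb/s, "
                  f"limits 2x100Gb/s: {limited}")
```

Under these assumptions a Gen 3 x16 slot tops out near 126 Gb/s, so two ports running at 100Gb/s at the same time would be limited by the slot, while a Gen 4 x16 slot (about 252 Gb/s) leaves headroom.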

| Ordering Part Number (OPN) | Ports | Maximum Speed | Host Interface | Key Differentiator |
|---|---|---|---|---|
| MCX653106A-ECAT | 2x QSFP56 | 100Gb/s (lower speeds also available) | PCIe 3.0/4.0 x16 | Dual-port 100GbE/IB with advanced offloads; confirm hardware encryption support against the NVIDIA specification; well suited to virtualization and storage |
| MCX653105A-HDAT | 1x QSFP56 | 200Gb/s | PCIe 3.0/4.0 x16 | Single-port 200Gb/s, encryption support |
| MCX653106A-HDAT | 2x QSFP56 | 200Gb/s | PCIe 3.0/4.0 x16 | Dual-port 200Gb/s full bandwidth, encryption offload |
| MCX653105A-ECAT | 1x QSFP56 | 100Gb/s | PCIe x16 | Single-port 100Gb/s, lower-cost entry point |
| MCX653436A-HDAT (OCP 3.0) | 2x QSFP56 | 200Gb/s | PCIe 3.0/4.0 x16 | OCP 3.0 small form factor, dual-port |

- Maximum Application Performance: Hardware offloads for MPI, NVMe-oF, and encryption free CPU cores for actual workloads.

- Future-Ready Bandwidth: PCIe 4.0 and 200Gb/s readiness extend the adapter's useful life in high-speed networks.

- In-Network Memory and Computing: Supports collective offloads and burst buffering, which reduce data-movement overhead.

- Dependable Security: FIPS-compliant block-level AES-XTS encryption protects data both at rest and in transit without a performance penalty.

- Simplified Management: Broad OS and hypervisor support with a unified driver stack (OFED, WinOF-2).

Hong Kong Starsurge Group provides full technical support, warranty coverage, and RMA services for all NVIDIA ConnectX adapters. Our team of network engineers assists with configuration, firmware updates, and performance tuning. We offer global shipping, volume pricing for data center projects, and customized stock reservations. For bulk orders, contact our sales team for custom quotes and lead-time details.

- Confirm that the PCIe slot supplies enough power (up to 75W through the slot; this adapter typically draws <25W).

- For liquid-cooled platforms, check compatibility with the Intel Server System D50TNP if a cold-plate variant is required (this OPN is standard air-cooled).

- Verify OS driver compatibility against the latest OFED or WinOF-2 stacks.
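
On a Linux host with the drivers loaded, one way to confirm the negotiated link rate after installation is to read the port's `rate` file under sysfs. The sketch below assumes the usual `/sys/class/infiniband/<device>/ports/<port>/rate` layout and a text format such as `100 Gb/sec (4X EDR)`. The device name `mlx5_0` is an assumed default that may differ on your system, and the helper names are hypothetical.

```python
import re
from pathlib import Path

# Assumption: the rate file starts with a number followed by "Gb/sec",
# for example "100 Gb/sec (4X EDR)".
RATE_PATTERN = re.compile(r"^\s*(\d+(?:\.\d+)?)\s*Gb/sec")


def parse_rate_gbps(text: str) -> float:
    """Extract the numeric link rate in Gb/s from a sysfs rate string."""
    match = RATE_PATTERN.match(text)
    if match is None:
        raise ValueError(f"unrecognized rate string: {text!r}")
    return float(match.group(1))


def read_port_rate_gbps(device: str = "mlx5_0", port: int = 1,
                        sysfs_root: str = "/sys/class/infiniband") -> float:
    """Read and parse the negotiated rate for one port of one device."""
    rate_file = Path(sysfs_root) / device / "ports" / str(port) / "rate"
    return parse_rate_gbps(rate_file.read_text())


if __name__ == "__main__":
    try:
        print(f"Negotiated rate: {read_port_rate_gbps()} Gb/s")
    except (OSError, ValueError) as exc:
        # No adapter, a different device name, or an unexpected format.
        print(f"Could not read link rate: {exc}")
```

A rate below the expected value usually points to the cable, the switch port configuration, or the firmware settings rather than the driver, so check those first.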

Since 2008, Hong Kong Starsurge Group Co., Limited has supplied enterprise-grade network hardware, system integration, and IT services worldwide. As a trusted partner for NVIDIA networking products, Starsurge delivers certified solutions for government, finance, healthcare, education, and hyperscale data centers. Our technical team supports deployment from pre-sales architecture design through after-sales support. With a customer-first philosophy, we supply customized, scalable infrastructure components, including NICs, switches, cables, and end-to-end networking solutions.

Global delivery · Multilingual support · OEM services available

| Component / Ecosystem | Supported | Notes |
|---|---|---|
| NVIDIA Quantum HDR switches | ✓ Yes | Full 200Gb/s fabric integration |
| Ethernet 200G/100G switches | ✓ Yes | Requires compatible transceiver/FEC modes |
| GPUDirect RDMA | ✓ Yes | Supported NVIDIA GPU series |
| VMware vSphere / ESXi | ✓ Certified | Native drivers, SR-IOV support |
| Windows Server 2019/2022 | ✓ Yes | WinOF-2 driver package |
| Linux kernel and OFED | ✓ Full support | MLNX_OFED, in-box drivers |

- Confirm the required link speed: does dual-port 100Gb/s meet your cluster bandwidth plan? For dual-port 200Gb/s, consider the -HDAT OPN.

- Verify the server PCIe slot: x16 physical; Gen 3 or Gen 4 recommended.

- Check the cable type: QSFP56 passive copper (up to 5m), or active optical cables for longer distances.

- Make sure operating system drivers are available (OFED, WinOF).

- For encryption requirements: confirm whether built-in block encryption is needed. The MCX653106A-ECAT supports AES-XTS, but always confirm the FIPS level against the NVIDIA datasheet.

- Assess virtualization needs: SR-IOV, VXLAN offload, and so on.
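
The first checklist item can be expressed as a small filter over the ordering table. The sketch below transcribes the port counts and maximum speeds from the OPN table in this listing. The `Variant` class and the `candidates` function are hypothetical helpers for illustration, and the data should be checked against the NVIDIA datasheet before ordering.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    opn: str
    ports: int
    max_gbps: int
    form_factor: str


# Transcribed from the OPN table in this listing (not an official catalog).
VARIANTS = [
    Variant("MCX653106A-ECAT", 2, 100, "PCIe"),
    Variant("MCX653105A-HDAT", 1, 200, "PCIe"),
    Variant("MCX653106A-HDAT", 2, 200, "PCIe"),
    Variant("MCX653105A-ECAT", 1, 100, "PCIe"),
    Variant("MCX653436A-HDAT", 2, 200, "OCP 3.0"),
]


def candidates(ports_needed: int, gbps_per_port: int,
               form_factor: str = "PCIe") -> list[Variant]:
    """Return variants that meet the requirement, smallest match first."""
    matches = [
        v for v in VARIANTS
        if v.ports >= ports_needed
        and v.max_gbps >= gbps_per_port
        and v.form_factor == form_factor
    ]
    # Prefer the lowest speed and port count that still satisfies the plan.
    return sorted(matches, key=lambda v: (v.max_gbps, v.ports))


if __name__ == "__main__":
    for variant in candidates(ports_needed=2, gbps_per_port=200):
        print(variant.opn)
```

Sorting by speed and then port count puts the closest fit first, which mirrors the checklist's advice to move to an -HDAT OPN only when 200Gb/s per port is actually required.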